Speaker

Description

The newly formed Data Reduction and Analysis group at Synchrotron SOLEIL is responsible for developing and implementing data analysis software and methods for users of the facility. Rapid and efficient data analysis adds value to the users’ experience, and the group will work to provide simple, fast tools for this purpose.

We aim to develop a cloud-based infrastructure with on-demand remote desktop services, Jupyter notebooks, and micro-services for scientific computation and automatic processing. These services will be populated with scientific applications for synchrotron radiation data treatment, starting with, e.g., MX and SAXS data reduction and analysis, tomography and ptychography reconstruction, fluorescence and absorption spectroscopy, photo-emission spectroscopy, and modelling tools (materials, beam-lines). As our workforce is still limited, we shall initially favour workflows that combine existing software solutions.

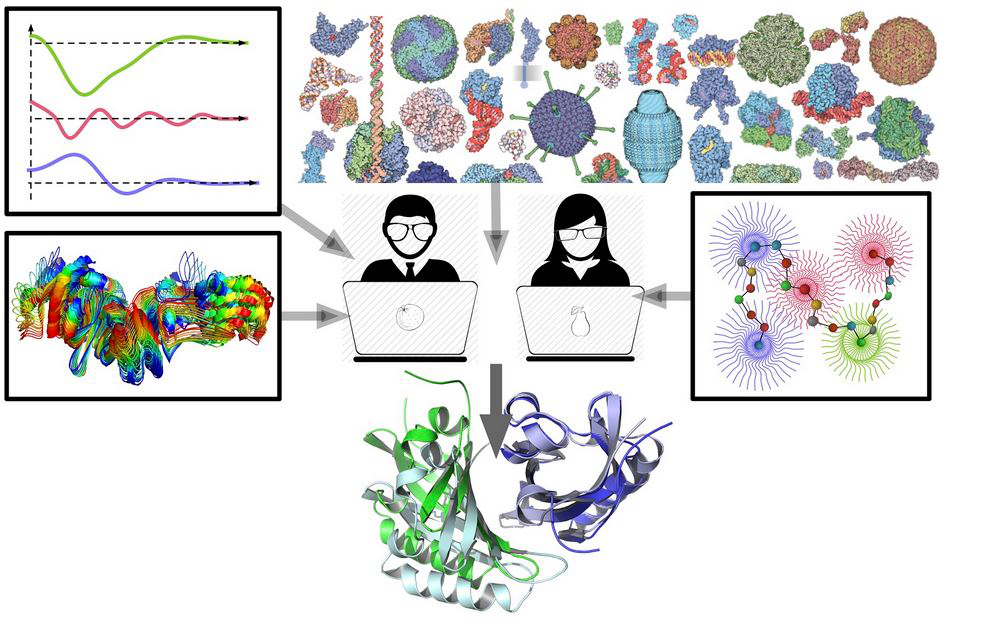

Within the framework of integrated biology, our group will additionally provide a set of data treatment tools for beam-line users, e.g. for computational tasks, as well as data management workflows to merge multi-technique and multi-scale data sets.